In brief: As gaming platforms face growing pressure to protect minors and prove those controls work, a simple age gate stops being enough. Teams need to decide which surfaces actually require age assurance — chat, DMs, mature content, purchases, or UGC — then choose the method, fallback, and trigger point for each one.

Age verification, once associated mostly with gambling and other adult-only services, is now reaching mainstream video gaming. Roblox, for example, now requires age checks for access to chat, while platforms such as Steam, PlayStation, and Xbox have introduced age-assurance flows for minors in regulated markets.

This article looks at how age verification is entering non-gambling gaming, where it is already being used, and what gaming platforms should consider before rolling it out.

Why is age verification the main identity use case in gaming?

Age verification has become the dominant identity use case in video gaming as platforms face growing pressure to prove that child safety controls go beyond self-reported dates of birth. That pressure is increasingly coming from regulators.

In the UK, that pressure is already changing product decisions: Ofcom says more gaming services are introducing age checks as the Online Safety Act (OSA) rolls out, backed by fines of up to £18 million or 10% of qualifying worldwide revenue. In the US, the FTC’s 2026 COPPA policy statement explicitly encourages the use of age-verification technologies in child-safety contexts. In the EU, the Commission’s age-verification work is tied to protecting minors online and supporting the Digital Services Act.

For now, gaming platforms are not being pushed toward full KYC but rather toward stronger age-based access controls: who can interact, what spaces users can enter, and which titles or content they can access.

Which gaming platforms are already enforcing age verification?

Several major gaming platforms already use age checks to control access to chat, mature content, or certain account features. However, the methods vary. Some platforms rely on facial age estimation, some offer ID-based checks, and some use credit card validation or parental consent flows.

The UK currently has the clearest officially documented platform-wide age-verification rollouts, but age checks in gaming aren’t limited to the UK. Roblox applies them more broadly, and Epic Games uses cabined accounts and parental consent across markets.

| Platform | Use case | Method | What verification affects | Popular titles affected |
|---|---|---|---|---|
| Epic Games | Parental consent and youth account restrictions | Cabined accounts and parental consent | Purchases, chat, account features for younger users | Fortnite, Rocket League, and Fall Guys |
| PlayStation (UK) | Region-driven platform-wide controls | Mobile provider check, facial age estimation, or ID verification | PEGI 18 buys, new adult accounts | Platform-level impact rather than title-specific |
| Roblox | Age-based communication controls | Facial age estimation or ID verification | Chat, trusted connections, age-based experiences | User-generated experiences, such as Brookhaven RP, Adopt Me!, and Dress to Impress |
| Steam (UK) | Mature-content gating at the storefront and community levels | Credit card check | Mature game access | Mature titles and related store/community content, such as Grand Theft Auto V, The Witcher 3, and DOOM Eternal |
| Xbox (UK) | Region-driven platform-wide controls | ID verification, facial age estimation, mobile provider check, or credit card check | Social features, mature games | Platform-level impact across multiplayer and social titles, including Call of Duty, Halo Infinite, and Sea of Thieves |


What are gaming platforms actually defending against?

Age verification for gaming platforms goes beyond blocking kids who lie about their birthday. That is only the most obvious problem, and often the easiest one to spot.

The real challenge starts when age controls have to hold up against actual misuse. A minor may use an adult’s face or ID to unlock chat, enter mature spaces through a borrowed account, or keep trying until something works. That is the technical side of the problem:

- spoofed selfies,
- replayed faces,
- borrowed credentials,
- adult verification completed on behalf of a minor,
- and repeated bypass attempts.

The product risk is what comes next:

- Underage users may gain access to mature content, including PEGI 18 titles, adult-rated lobbies, or user-generated experiences with explicit themes.
- Weak age controls can also leave public chat, social discovery, or direct communication features open to unsafe contact between adults and minors.
- Purchase flows create another risk: younger users may use stored parental payment methods or exploit weak account controls around age-sensitive transactions.

That’s why weak gates do not solve much. They create the appearance of control without making the control hold up in practice, while moderation and support teams inherit a problem the verification flow was supposed to reduce.
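
To make the "repeated bypass attempts" item concrete, here is a minimal sketch of a sliding-window cap on failed verification attempts. It is only one way to approach the problem: the class name `AttemptLimiter`, its defaults (3 failures per hour), and the per-account keying are illustrative assumptions rather than anything a specific platform is known to use, and it does nothing on its own against borrowed credentials or spoofed selfies.

```python
import time
from collections import defaultdict, deque
from typing import Deque, Dict, Optional


class AttemptLimiter:
    """Sliding-window cap on failed age-verification attempts per account.

    Hypothetical helper for illustration; thresholds are assumptions.
    """

    def __init__(self, max_failures: int = 3, window_seconds: float = 3600.0):
        self.max_failures = max_failures
        self.window_seconds = window_seconds
        # account_id -> timestamps of recent failed attempts, oldest first
        self._failures: Dict[str, Deque[float]] = defaultdict(deque)

    def _prune(self, account_id: str, now: float) -> Deque[float]:
        """Drop failures that have aged out of the window."""
        window = self._failures[account_id]
        while window and now - window[0] >= self.window_seconds:
            window.popleft()
        return window

    def record_failure(self, account_id: str, now: Optional[float] = None) -> None:
        now = time.monotonic() if now is None else now
        self._prune(account_id, now).append(now)

    def is_locked(self, account_id: str, now: Optional[float] = None) -> bool:
        """True when the account has hit the failure cap inside the window."""
        now = time.monotonic() if now is None else now
        return len(self._prune(account_id, now)) >= self.max_failures
```

In a real flow, a locked account would more plausibly be routed to a stronger check or to manual review than blocked outright, so that legitimate users still have a way through.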

Where do age checks belong in a gaming platform?

Not every part of a gaming platform needs the same level of proof. The better approach is to apply age checks where the business risk is real: chat, private contact, mature content, purchases, and user-generated spaces.

| Product surface | Business risk | Lightest workable method | When stronger proof is needed |
|---|---|---|---|
| Public chat | Unsafe contact between adults and minors, moderation burden, reputational risk | Facial age estimation or age-based account status | Inconclusive estimate, repeated failures, or an account tied to reports or abuse |
| Direct messages / friend requests | Higher grooming and harassment risk, with less visibility for moderation teams | Keep friend requests/DMs off by default for younger users or unknown-age users | Repeated safety reports and user complaints |
| Mature store pages or titles | Underage access to restricted content, policy violations, regulatory exposure in some markets | Credit card or age-assurance check | If regulation or local policy requires stronger proof |
| Creator / UGC spaces | Exposure to explicit or age-inappropriate content, moderation burden | Age-based routing, access tiers, or restricted discovery | Adult-oriented uploads or entry to sensitive communities |
| In-game purchases | Unauthorized minor spending, chargeback spikes, parental disputes, policy risk | Account-level age gate, stored payment signal, or parent approval | On the first purchase, when purchase value is high, disputes repeat, the stated age is disputed, or the account shows repeated youth-flagged transactions |
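
One way to keep a mapping like this enforceable is to express it as data rather than scattering it across feature code. The sketch below encodes the table as a per-surface policy with a default method and a set of escalation signals. All identifiers (`Surface`, `SurfacePolicy`, `required_check`, and every signal string) are hypothetical names chosen for illustration; a real platform would derive its signals from its own moderation, payment, and verification events.

```python
from dataclasses import dataclass
from enum import Enum


class Surface(Enum):
    PUBLIC_CHAT = "public_chat"
    DIRECT_MESSAGES = "direct_messages"
    MATURE_CONTENT = "mature_content"
    UGC_SPACES = "ugc_spaces"
    PURCHASES = "purchases"


@dataclass(frozen=True)
class SurfacePolicy:
    default_method: str            # lightest workable method for the surface
    escalation_signals: frozenset  # signals that call for stronger proof


# Illustrative encoding of the table; method and signal names are assumptions.
POLICIES = {
    Surface.PUBLIC_CHAT: SurfacePolicy(
        "facial_age_estimation",
        frozenset({"inconclusive_estimate", "repeated_failures", "abuse_report"}),
    ),
    Surface.DIRECT_MESSAGES: SurfacePolicy(
        "off_by_default_for_young_or_unknown_age",
        frozenset({"repeated_safety_reports", "user_complaints"}),
    ),
    Surface.MATURE_CONTENT: SurfacePolicy(
        "credit_card_or_age_assurance",
        frozenset({"regulation_requires_stronger_proof"}),
    ),
    Surface.UGC_SPACES: SurfacePolicy(
        "age_based_routing",
        frozenset({"adult_oriented_upload", "sensitive_community_entry"}),
    ),
    Surface.PURCHASES: SurfacePolicy(
        "account_level_age_gate",
        frozenset({"first_purchase", "high_value_purchase", "repeated_disputes",
                   "disputed_age", "youth_flagged_transactions"}),
    ),
}


def required_check(surface: Surface, signals: set) -> str:
    """Return the surface's default method, or 'stronger_proof' when any
    escalation signal for that surface is present."""
    policy = POLICIES[surface]
    if policy.escalation_signals & signals:
        return "stronger_proof"
    return policy.default_method
```

For example, `required_check(Surface.PUBLIC_CHAT, {"abuse_report"})` returns `"stronger_proof"`, while the same call with an empty signal set returns the default method. Keeping the rules in one structure also makes it easier to vary them by region.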

Why is age verification in gaming hard to implement well?

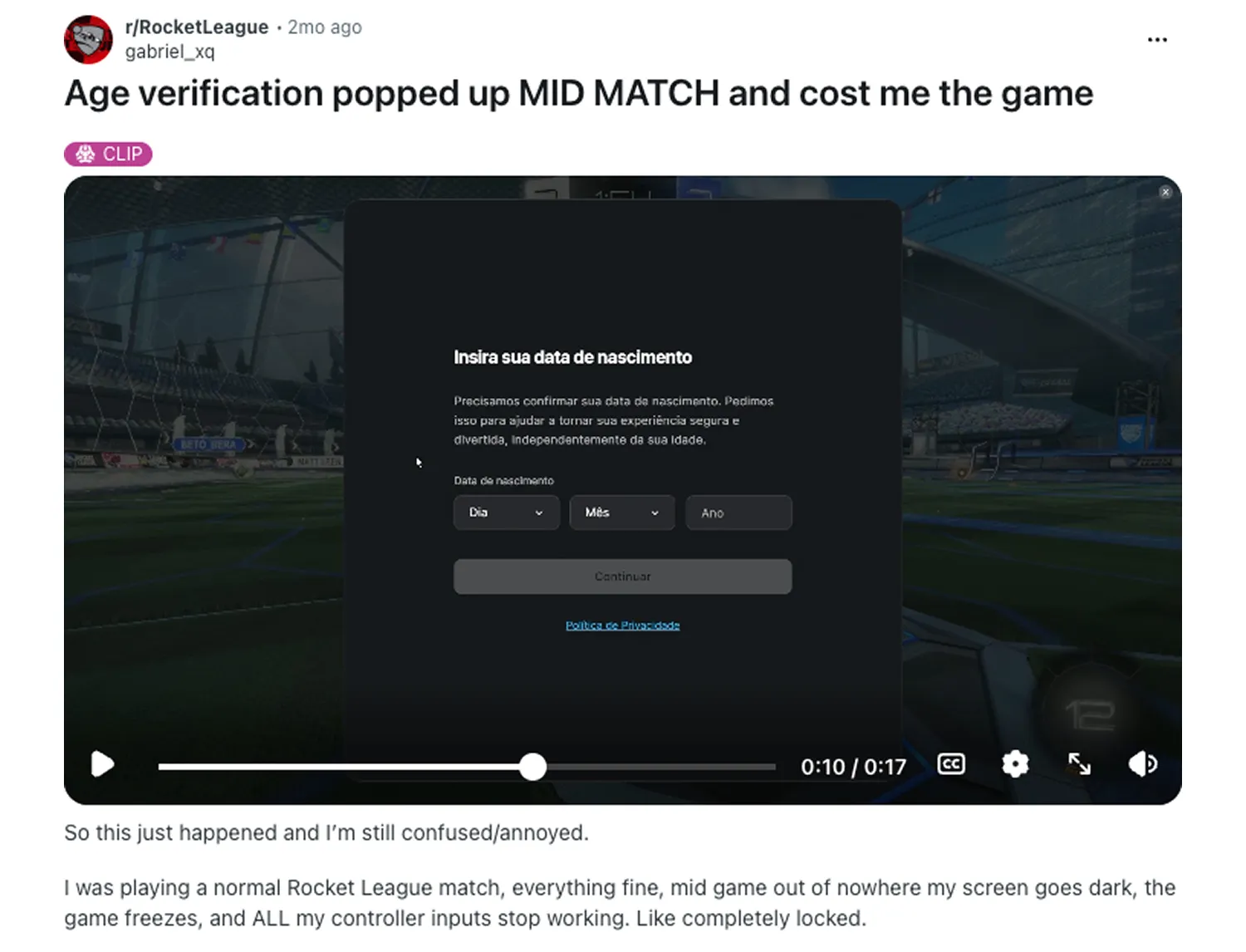

Given the immersive nature of gaming, age verification here needs to be treated as product design, not just policy enforcement. In practice, age-verification flows usually fail in one of four places:

- The check appears at the wrong moment. A gate can feel broken even when the policy behind it is valid. If it interrupts gameplay, appears before the user understands what feature they are unlocking, or blocks a feature without context, the platform creates friction at the worst possible moment.
- The check appears too late. The platform allows risky interaction first, then tries to add verification after the fact.
- The method does not match the surface. A light gate is used where stronger proof is needed, or a heavy check is forced onto a low-risk action.
- There is no fallback. Legitimate users fail, cannot recover, and end up in support or abandon the flow.

User backlash often has less to do with the idea of age verification itself than with poor timing. Source: Reddit
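
The "no fallback" failure in particular lends itself to a short sketch. The function below tries verification methods in order and moves to the next one only when a result is inconclusive, ending in manual review instead of a dead end. The names `Outcome` and `resolve_age`, the three-way result, and the assumption that each method reports borderline cases as inconclusive are all illustrative; real verification providers expose their own result models.

```python
from enum import Enum
from typing import Callable, List, Optional, Tuple


class Outcome(Enum):
    PASS = "pass"                  # user meets the age requirement
    FAIL = "fail"                  # user conclusively does not meet it
    INCONCLUSIVE = "inconclusive"  # method could not decide (e.g. borderline estimate)


# A check takes a user id and returns an Outcome. Real checks would call a
# verification provider; here they are placeholders supplied by the caller.
Check = Callable[[str], Outcome]


def resolve_age(user_id: str,
                checks: List[Tuple[str, Check]]) -> Tuple[str, Optional[str]]:
    """Run checks lightest-first and stop at the first conclusive result.

    Returns (decision, method_name), where decision is 'verified', 'denied',
    or 'manual_review' when every method was inconclusive.
    """
    for name, check in checks:
        outcome = check(user_id)
        if outcome is Outcome.PASS:
            return "verified", name
        if outcome is Outcome.FAIL:
            return "denied", name
        # INCONCLUSIVE: fall through to the next, stronger method
    return "manual_review", None
```

Ordering the list from lightest to heaviest method (for instance, an estimate first and a document check second) keeps friction low for most users while still giving borderline cases a path to recover.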

When does a basic age gate stop being enough?

A basic age gate works until age stops being one decision. A gaming platform may need one check for chat, another for mature content, a fallback for disputed results, parental consent for youth accounts, and stronger proof in markets where regulation is tighter. At that point, age verification stops being a feature and becomes an identity verification system.

That shift exposes the limits of simple methods:

- A birthdate field is easy to deploy, but easy to fake.
- A credit card check may work for some mature-content gates, but it says little about who is actually holding the controller.
- Face biometrics is faster, but it still needs fallback, liveness, and support for edge cases.

Once those layers need to work together across multiple surfaces, full-fledged identity verification infrastructure becomes part of the product.

Regula is one example of such a broader setup. It combines facial biometrics with liveness detection, independently validated age estimation, document verification for international IDs, and a platform that connects those checks into one flow across the user journey. That gives gaming platforms a more practical way to move beyond a basic age gate without stitching together separate tools for each step.

As age verification moves from edge case to product requirement in gaming, platforms will need more than a simple gate. They will need systems that can adapt to different risks, regions, and user journeys without collapsing into friction or inconsistency.

Planning age verification beyond a birthdate field? Explore how Regula approaches age assurance for digital platforms.