TL;DR: Liveness detection helps prevent biometric fraud by acting as a live-presence filter before the business trusts a biometric sample. The most sensitive verification flows typically require active liveness, while lower-risk ones can rely on passive checks to reduce friction.

Generative AI tools have lowered the cost and effort required to produce realistic deepfakes for biometric spoofing. In some reported cases, ready-to-go synthetic identities have been offered for as little as $15.

As a result, identity verification cannot rely on selfie capture alone. For businesses that onboard users remotely, liveness verification has become a key defense against biometric fraud.

Let’s see how it works in detail.

What is liveness detection?

Liveness detection is used to determine whether a submitted biometric sample reflects live human presence. In remote onboarding and authentication, it is typically performed during selfie or short video capture. The goal of liveness detection is to reduce the success rate of spoofing attacks that rely on replayed videos, injected media, masks, deepfakes, and other fake biometric inputs.

In most identity verification contexts, liveness detection refers to face liveness checks. However, the same principle applies to other biometric characteristics used as authentication factors, such as fingerprints or voice. It can also be applied to ID documents, where document liveness verifies that a physical ID is being presented rather than a printed or digital copy.

Where did the idea of liveness detection come from?

Long before liveness detection became a biometric security term, the underlying question was already clear: can a system distinguish a real human from an imitation? That broader idea is often linked to Alan Turing’s work. The term liveness was later used by Dorothy E. Denning in her publication to describe the need to confirm the presence of a real person during authentication.

What types of liveness detection exist?

Biometric liveness detection methods differ in how much input they require from the user. Some ask the user to follow prompts, while others analyze a selfie or video with little to no additional effort. The right choice depends on how a business balances fraud risk, user friction, and device limitations.

Active liveness detection

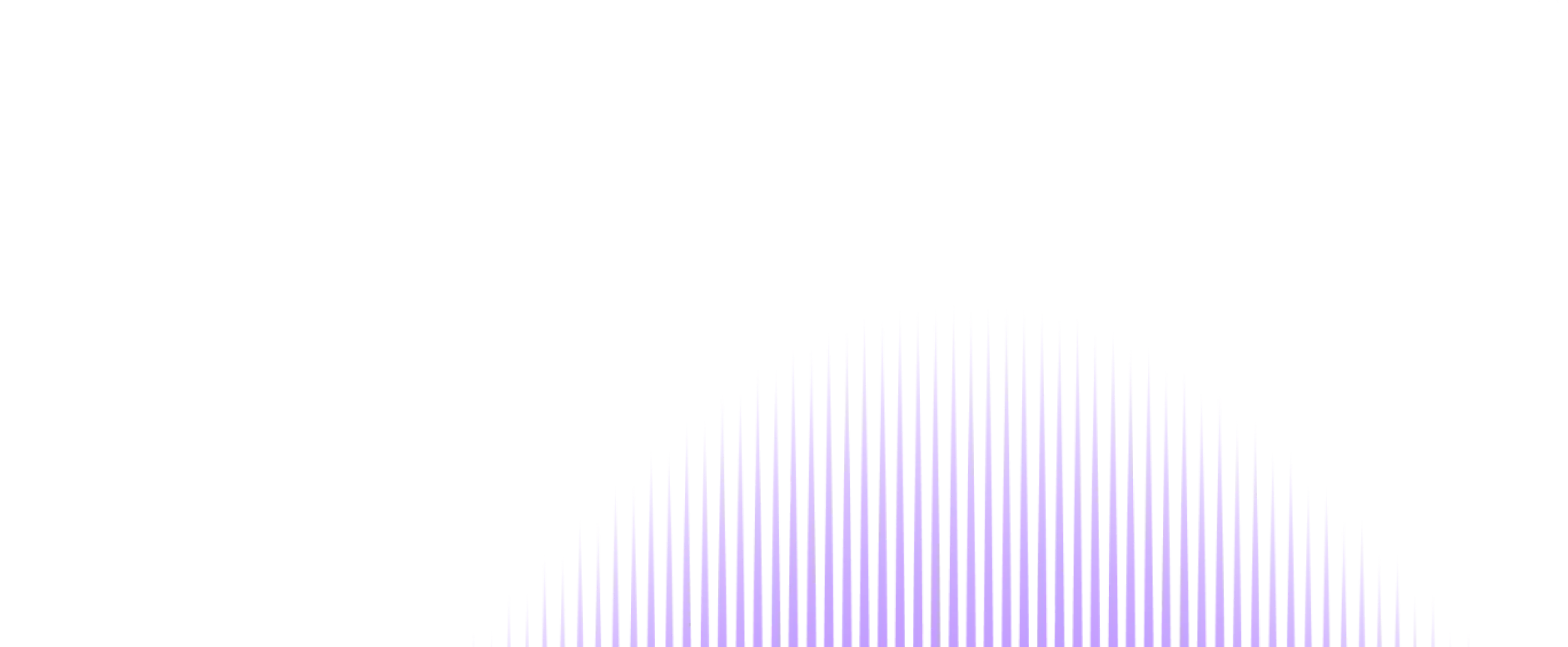

Active liveness detection is the first generation of liveness technology and is based on challenge-response interaction. It requires the user to complete one or more prompted actions during capture, such as turning their head, smiling, or looking in a specific direction.

The purpose is to make spoofing harder by checking whether the presented face can respond like a live person in real time. Because it adds challenge-response steps, active liveness detection can provide stronger anti-spoofing signals than a simple selfie flow.
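The challenge-response idea can be sketched in a few lines. This is a minimal illustration, not a description of any vendor's implementation: the action names, the `Challenge` type, the 20-second expiry, and the assumption that a separate vision model reports which actions the user performed are all hypothetical.

```python
import secrets
import time
from dataclasses import dataclass

# Hypothetical prompt set, loosely based on the actions named in the article.
ACTIONS = ("turn_head_left", "turn_head_right", "smile", "look_up")


@dataclass
class Challenge:
    actions: tuple
    issued_at: float
    ttl_seconds: float = 20.0  # illustrative expiry window


def issue_challenge(n_actions=2, now=None):
    """Pick an unpredictable sequence of distinct prompts.

    Randomizing the sequence makes it less likely that a pre-recorded
    video happens to contain the right actions in the right order.
    """
    rng = secrets.SystemRandom()
    actions = tuple(rng.sample(ACTIONS, n_actions))
    return Challenge(actions=actions, issued_at=time.time() if now is None else now)


def verify_response(challenge, observed_actions, now=None):
    """Accept only if the observed actions match the prompts, in order, before expiry.

    `observed_actions` is assumed to come from a separate vision model
    (not shown) that labels what the user actually did on camera.
    """
    now = time.time() if now is None else now
    if now - challenge.issued_at > challenge.ttl_seconds:
        return False
    return tuple(observed_actions) == challenge.actions
```

In a real flow, the action check would be only one signal alongside analysis of the captured frames themselves.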

The trade-off is user experience. Active checks take longer, demand more effort from a user, and may be less convenient for older users or people in poor capture conditions.

Active liveness checks are generally considered the most spoof-resistant of the three methods, at the cost of added friction.

Passive liveness detection

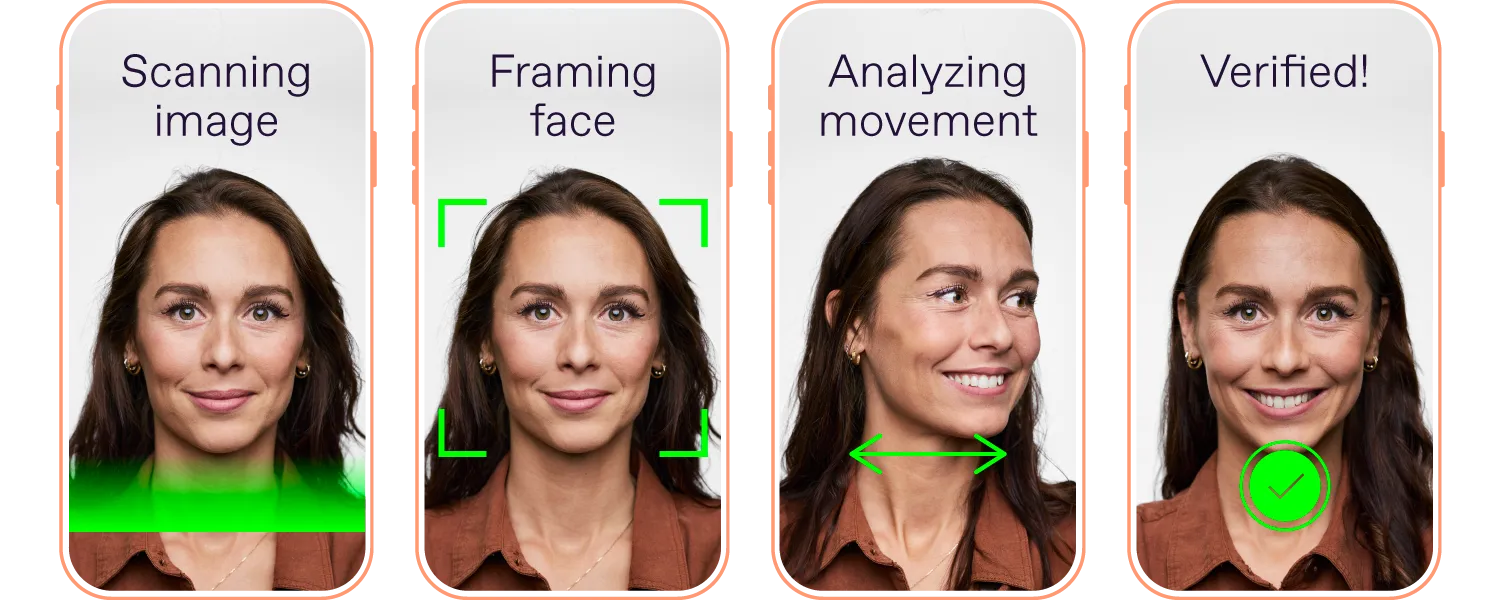

Passive liveness detection analyzes biometrics without asking the user to perform extra actions beyond standard capture. In most cases, the user simply takes a selfie. This makes passive liveness easier to complete.

Instead of relying on challenge-response prompts, passive liveness uses image and video analysis to detect cues associated with spoofing: screen artifacts, replay traces, unnatural texture, lighting inconsistencies, or other anomalies.

The trade-off is that performance depends heavily on capture quality, camera capabilities, and the power of the detection engine. In lower-quality environments, passive liveness detection may have less signal to work with than active detection.
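Because passive checks depend on capture quality, one common precaution is to reject unusable frames before running liveness analysis at all. The sketch below is an assumption-laden example of such a gate, using the variance of a Laplacian filter as a rough sharpness measure and the mean pixel value as a brightness check. The function names and thresholds are illustrative and would need tuning per device; this is a pre-filter, not a liveness detector.

```python
from statistics import pvariance


def laplacian_variance(gray):
    """Variance of a 4-neighbour Laplacian over a grayscale image.

    `gray` is a list of rows of pixel values (0-255), at least 3x3.
    Low values usually indicate a blurry or featureless capture.
    """
    h, w = len(gray), len(gray[0])
    responses = []
    for y in range(1, h - 1):
        for x in range(1, w - 1):
            lap = (gray[y - 1][x] + gray[y + 1][x]
                   + gray[y][x - 1] + gray[y][x + 1]
                   - 4 * gray[y][x])
            responses.append(lap)
    return pvariance(responses)


def mean_brightness(gray):
    """Average pixel value across the whole image."""
    pixels = [p for row in gray for p in row]
    return sum(pixels) / len(pixels)


def capture_is_usable(gray, min_sharpness=50.0,
                      min_brightness=40.0, max_brightness=220.0):
    """Hypothetical quality gate; all thresholds are illustrative."""
    return (laplacian_variance(gray) >= min_sharpness
            and min_brightness <= mean_brightness(gray) <= max_brightness)
```

A frame that fails the gate would typically trigger a recapture prompt rather than a liveness rejection.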

Passive liveness checks are the easiest of all liveness detection methods for end users.

Hybrid liveness detection (also called “semi-passive liveness”)

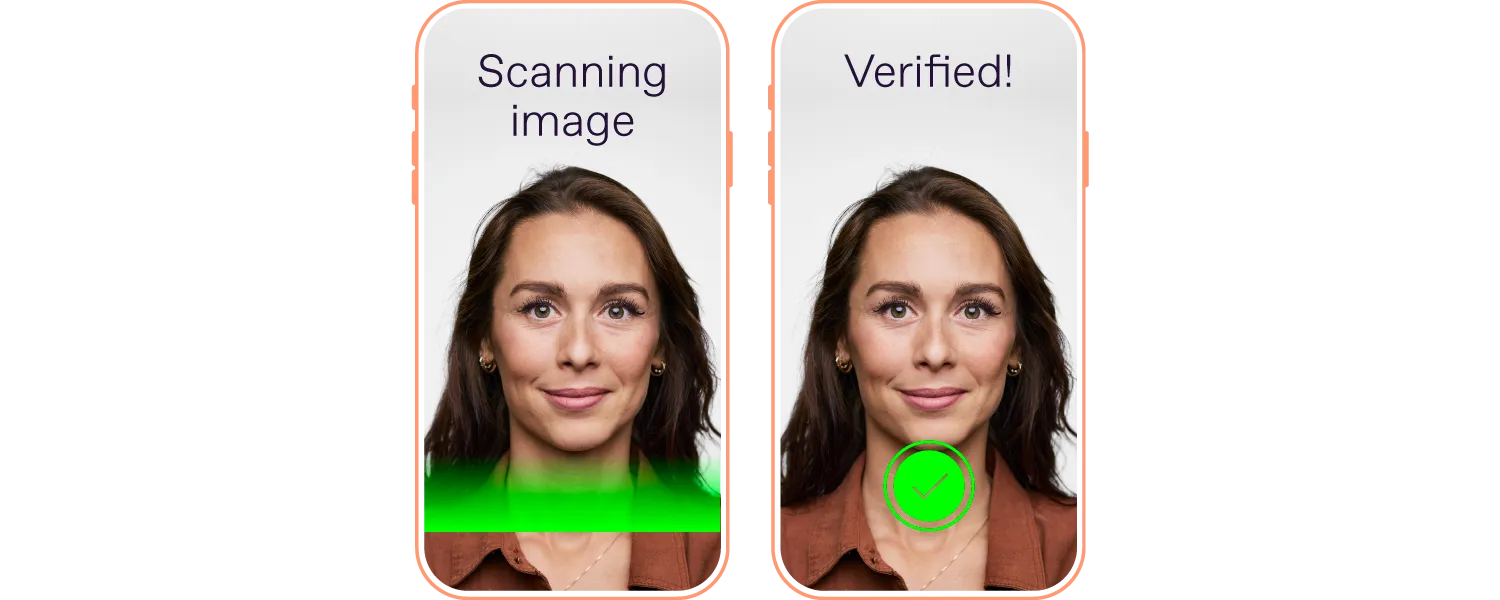

Hybrid liveness combines passive analysis with one lightweight prompted action. Unlike active liveness, where challenge-response steps form the core of the check, hybrid flows rely primarily on automated analysis of the captured sample and use a simple action as an additional signal. For example, users may need to take a selfie and then smile into the mobile camera.

The idea behind hybrid liveness is to create a verification flow that is not too disruptive for customers, yet still more secure than passive liveness.
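One way to picture a hybrid flow is as a decision rule in which the passive score does most of the work and the prompted action serves as a supporting signal. The sketch below assumes a hypothetical passive model that outputs a score in [0, 1] (higher means more likely live) and a boolean saying whether the single prompted action was observed. The thresholds and outcome labels are illustrative only.

```python
def hybrid_decision(passive_score, action_detected,
                    accept_at=0.90, review_at=0.60):
    """Combine a passive liveness score with one prompted action.

    passive_score: hypothetical model output in [0, 1].
    action_detected: True if the prompted action (e.g. a smile) was seen.
    Returns one of "accept", "review", "retry", "reject".
    """
    if not 0.0 <= passive_score <= 1.0:
        raise ValueError("passive_score must be in [0, 1]")
    if action_detected and passive_score >= accept_at:
        return "accept"   # strong passive signal, action confirmed
    if action_detected and passive_score >= review_at:
        return "review"   # borderline passive signal, escalate
    if passive_score >= accept_at:
        return "retry"    # looks live, but the action was not observed
    return "reject"
```

Here the action never rescues a weak passive score, which reflects the idea that automated analysis remains the core of the check.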

Hybrid liveness is a middle-ground method that offers stronger assurance than passive checks with less friction than active ones.

How does liveness detection work?

Liveness detection works by analyzing a submitted face sample for signs of live human presence and for artifacts commonly associated with spoofing attacks. To make that assessment, face liveness detection software (often delivered as a face liveness SDK) looks for visual and behavioral cues that help distinguish a real person from a fake biometric input.

Common spoofing artifacts and indicators include:

- High-resolution printed photos and paper masks.
- Human-like dolls, latex, silicone, or 3D masks.
- Wax heads, mannequins, and other head-like artifacts.
- Artificial skin tone and unusual shadows that may appear in deepfakes.
- Signs of digital presentation, such as moiré patterns, excessive screen glare, or display artifacts.

Under the hood, face liveness detection algorithms are powered by neural networks trained on large volumes of real and spoofed face samples. These models learn to recognize patterns associated with synthetic or manipulated inputs and to distinguish them from natural facial characteristics.

To perform a liveness check, the system analyzes the user’s face and builds a map representing its unique properties. This map can be two-dimensional (X and Y) or three-dimensional (X, Y, and Z); the two approaches are often referred to as 2D and 3D liveness, respectively.

Passive liveness often relies on 2D image analysis, which is why a selfie may be enough to capture the signals needed for evaluation. Active liveness, by contrast, is more often associated with 3D analysis, where the user is prompted to perform actions that generate additional depth (Z-axis) information.

2D technology is generally considered faster, while 3D is generally considered more secure. This is why 3D liveness is recommended at critical points in the customer journey, such as payment approvals, while 2D technology suits lower-risk operations like face unlock.

How to choose the right liveness detection method

Choosing between active, passive, and hybrid liveness detection is not about which method is universally better. The table below compares the three approaches across the criteria that matter most in real identity verification flows.

| Type of liveness detection | What drives the check | Main advantage | Main disadvantage | Typical use cases |
|---|---|---|---|---|
| Active | Prompted user actions | Highest spoof resistance | More friction, higher drop-off risk | |
| Passive | Automated analysis of the captured sample | More user-friendly, faster completion | More dependent on capture quality and model strength | |
| Hybrid | Automated analysis plus one lightweight prompted action | Balances assurance and usability | Still adds some friction for a user | |
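The trade-offs above can be turned into a simple routing rule. The sketch below is one possible policy, not a recommendation: the `Risk` tiers, the mapping from tier to method, and the step-up from passive to hybrid when capture quality is poor are all assumptions made for illustration.

```python
from enum import Enum


class Risk(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


class Method(Enum):
    PASSIVE = "passive"
    HYBRID = "hybrid"
    ACTIVE = "active"


def choose_method(risk, low_quality_capture=False):
    """Map a flow's risk tier to a liveness method (hypothetical policy).

    High-risk flows get active checks, medium-risk flows get hybrid,
    and low-risk flows get passive. If capture quality is poor, a
    passive check is stepped up to hybrid, since passive analysis
    has less signal to work with in that case.
    """
    if risk is Risk.HIGH:
        return Method.ACTIVE
    if risk is Risk.MEDIUM:
        return Method.HYBRID
    return Method.HYBRID if low_quality_capture else Method.PASSIVE
```

For example, `choose_method(Risk.LOW)` returns `Method.PASSIVE`, while `choose_method(Risk.LOW, low_quality_capture=True)` returns `Method.HYBRID`.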

Why is liveness detection key for biometric systems?

Liveness detection is key for biometric systems because a convincing face sample does not, on its own, prove live presence. A spoofed or replayed biometric input may still appear valid unless the system can assess whether it comes from a real person in front of the camera.

The importance of biometric liveness detection is also reflected in industry standards, such as the ISO/IEC 30107 series dedicated to biometric presentation attack detection. In fact, liveness detection has already become a core safeguard in remote identity verification.
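ISO/IEC 30107-3 describes presentation attack detection performance using two error rates: APCER (attack presentations wrongly classified as bona fide) and BPCER (bona fide presentations wrongly classified as attacks). The sketch below shows how these could be computed from labeled scores at a given threshold. It is a simplification: it pools all attack samples together, whereas the standard reports APCER per attack instrument type, and the function name and score convention (higher means more likely live) are assumptions.

```python
def pad_error_rates(samples, threshold):
    """Compute (APCER, BPCER) at a decision threshold.

    samples: iterable of (score, is_attack) pairs, where a higher score
             means the system considers the sample more likely live.
    A sample is accepted as bona fide when score >= threshold.
    """
    samples = list(samples)
    attacks = [score for score, is_attack in samples if is_attack]
    bona_fide = [score for score, is_attack in samples if not is_attack]
    if not attacks or not bona_fide:
        raise ValueError("need at least one attack and one bona fide sample")

    # Attacks that slipped through as bona fide.
    apcer = sum(score >= threshold for score in attacks) / len(attacks)
    # Genuine users wrongly rejected as attacks.
    bpcer = sum(score < threshold for score in bona_fide) / len(bona_fide)
    return apcer, bpcer
```

Raising the threshold lowers APCER at the expense of BPCER, which mirrors the security versus friction trade-off discussed throughout this article.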

For teams evaluating the best face liveness detection tools for digital onboarding, liveness performance should be evaluated together with face matching, document verification, and integration flexibility. Regula solutions support this layered approach by helping teams build verification flows that address both biometric and document-based fraud.

If you’re designing or upgrading a remote identity verification flow, Regula can support the full verification process or complement your existing stack with missing verification components.